Over the last several months I’ve been searching for the ultimate learning algorithm that could reliably learn strategic plays in adversarial, sparse reward settings like foosball, air hockey and table tennis as part of an ongoing development of Infinite MR Arcade. One of my many Frankenstein architectures is a “tabular” Q learning with neural encoded latent integer state representation, which can surprisingly reach optimal solution to LunarLander-V3 under 30K steps and sometimes even under 10K steps (obviously dense reward, won’t lie about that), although I’ve once again hit a brick wall with issues of stability possibly stemming from state representation drift.

While trying to address stability issues this week by experimenting with various loss functions, I may have procrastinated a little bit by trying out a lot of quirky stuff, but fortunately it led to some very interesting finds that I am showing off today. One of those discoveries is that when you directly optimize position vectors by minimizing their total sum over pairwise euclidean distances (L2 norm), all points stabilize into a perfectly spherical geometry that flickers between two almost absolute configurations, only drifting slightly over time, which almost sounds like a contradiction or a bug since, intuitively, minimizing distance between every pair of points should collapse the entire set into a single point. I’ve initialized the points with Gaussian distribution as well as uniform distribution, yet they all stabilize into and resonate at a spherical configuration nonetheless (the z axis was scaled wrong in the videos hence the oval shape, but it’s really a perfect sphere):

observations = nn.Parameter(torch.randn(samples, 3) * 20).requires_grad_(True)

#observations = nn.Parameter((2*torch.rand(samples, 3)-1) * 20).requires_grad_(True)

optimizer = torch.optim.SGD([observations], lr=1e-3)

for _ in range(N):

optimizer.zero_grad()

obs_distances = torch.cdist(observations, observations, p=2.0)

mask = torch.triu(torch.ones(samples, samples), diagonal=1)

loss = torch.sum(mask * obs_distances)

loss.backward()

optimizer.step()

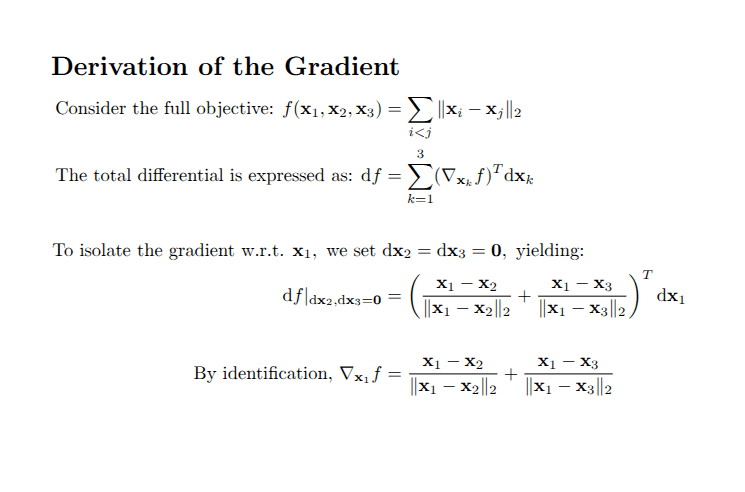

The switch between two configurations is somewhat easily explained. Consider 3 position vectors x1, x2 and x3 approximately forming an equilateral triangle:

Because the partial gradient of the objective with respect to vector x1 is a sum of two unit vectors, one in the direction from x2 to x1 and the other in the direction from x3 to x1, the negative gradient with respect to x1 points towards the opposite side from point x1. By symmetry, the negative gradients with respect to x2 and x3 would also be facing their respective opposite sides. Thus, once the distances among the points converge to something smaller than the learning rate, at the next iteration, the points would literally flip to the other side. Most importantly, note that since we started with approximately an equilateral triangle, on the next iteration, the points would flip back to approximately the same positions as the previous iteration.

As for how it always converges to a sphere, it’s a little less clear, but the intuition is that whatever gap exists between the points that is greater than the distance proportional to the unit learning rate will tend to close until all points are as compactly placed near each other and unit vector gradients of a point cancel each other out as much as possible. I am guessing that happens to be a hollow sphere with some points interspersed within it. However, I am wondering why it can’t just be a lattice.

Another interesting thing I tried out was using several objectives inspired by chemistry and physics, and the result is arguably more significant or interesting than the previous. Basically, I had three sets of vectors corresponding to electrons, protons and neutrons. I had electrons repel itself and protons also repel itself while the two attracted each other, just like in normal physics. I additionally had neutrons “attract” both electrons and protons while repelling itself, kind of like strong nuclear force, hoping that the neutron would serve as a stabilizing source of structure to bond the three elements.

The results? Polymerization (visualizing neutrons):

electrons = nn.Parameter(torch.randn(samples, 3, device=device) * 20).requires_grad_(True)

protons = nn.Parameter(torch.randn(samples, 3, device=device) * 20).requires_grad_(True)

neutrons = nn.Parameter(torch.randn(samples, 3, device=device) * 20).requires_grad_(True)

optimizer = torch.optim.SGD([electrons], lr=1e-4)

optimizer_1 = torch.optim.SGD([protons], lr=1e-4)

optimizer_2 = torch.optim.SGD([neutrons], lr=1e-4)

for i in range(N):

optimizer.zero_grad()

optimizer_1.zero_grad()

optimizer_2.zero_grad()

electron_distances = torch.cdist(electrons, electrons, p=2.0).to(device) #+ 1e-6

loss = -torch.sum(mask * electron_distances / electron_distances.detach()**2).to(device)

proton_distances = torch.cdist(protons, protons, p=2.0).to(device) #+ 1e-6

loss_1 = -torch.sum(mask * proton_distances / proton_distances.detach()**2).to(device)

neutron_distances = torch.cdist(neutrons, neutrons, p=2.0).to(device) #+ 1e-6

loss_2 = - torch.sum(mask * neutron_distances).to(device)

cross_distances = torch.cdist(electrons, protons, p=2.0).to(device)

loss_3 = torch.sum(mask * cross_distances).to(device)

cross_distances_1 = torch.cdist(neutrons, electrons, p=2.0).to(device)

loss_4 = torch.sum(mask * cross_distances_1).to(device)

cross_distances_2 = torch.cdist(neutrons, protons, p=2.0).to(device)

loss_5 = torch.sum(mask * cross_distances_2).to(device)

sum([loss,loss_1,loss_2,loss_3,loss_4,loss_5]).backward()

optimizer.step()

optimizer_1.step()

optimizer_2.step()

The video shows neutron particles self organizing in a helical polymer where new particles only append to one of the distal ends. Although it’s not shown in the video, the protons form a cloud around the neutrons most heavily concentrated near the earlier distal end creating some form of a protective shield from additional growth.

All in all, I was just very excited to see something akin to molecular physics without a supercomputer. Currently trying out various other things, so I’m hoping to share more as time goes on!

Leave a comment